Generally people who make more understated predictions end up right, and generally people who make overstated predictions end up wrong, save for an interesting twist of reality.

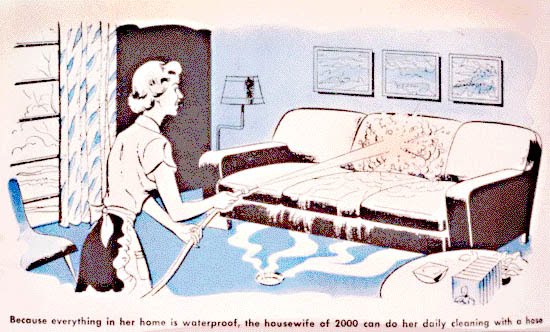

Today there are many nuclear power plants running in the world. Do we have nuclear-powered cars and aircraft? No. Today there are ongoing manned space programs and we've flown to the Moon. Do we have weekly transport services to Mare Tranquillitatis? No. Today there are innumerable amazing devices used in daily life. Did the housewife of 2000 do her daily cleaning with a hose? Of course not.

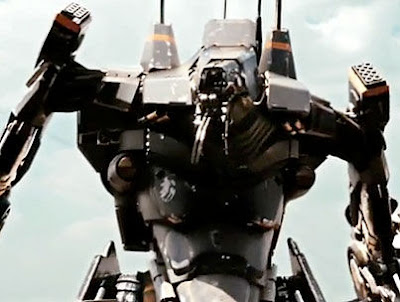

Personally I find people underestimate future advances when they go on about things such as humanoid robots; it's a view limited by an old science-fiction trope. We already have robots that do everything from mowing lawns to building cars to exploring other planets. The problem with these robots is that their functions are limited; they're limited by their specific programming.

Now imagine a generalist AI program, and I'm not talking "artificial person" here, I'm talking an AI software program. We already have AI in a lot of computer applications, but it's mostly limited to one thing or another. Now if you had an AI framework (you might call it a "Strong AI"), you could adapt it to different situations and get it to do all sorts of different things. Place this in an automaton body, any sort of machinery, and you now have an insanely powerful system.

Why do these robots have to look anything like humans? Of course they don't. They can be anything. They can be intelligent water delivery systems. Or CNC machines. Or lawnmower/vacuum cleaner/drink-server hybrids. Such systems could assist greatly in the operation of motor vehicles, aircraft and watercraft. Or an inbuilt general intelligence program could greatly assist people searching the internet.

Such a system could revolutionise mechanical design work and social planning. Now imagine that such a program could be configured to design at least parts of its future versions; it's already happening, in a sense: compilers can be used to compile the code of their future versions.

And then you have an immensely important technological revolution. A singularity. Not the namby-pamby cyberpunk fairytale singularity that people keep going on about, but a far more sensible and realistic revolution. We had the agricultural revolution, then the industrial revolution, we're having the information revolution (which, despite its massive impact, people have an odd tendency to ignore), and this would be the intelligence revolution.

Who predicted the internet? Moreover, who predicted that the internet would be as immensely influential as it is now? 2001 gave us HAL 9000, but no internet. I know which one I'd pick, and not because of the former's tendency to kill crews en route to Jupiter...

There are two problems here though, and the first is that software development doesn't coincide with hardware development. Just because we've got Moore's Law going doesn't mean we could make a really powerful generalist intelligence in 20 years, or 50 years, or maybe even 100 years. And it works both ways; we may well be able to make a generalist intelligence with the computing technology of today. It might not be a hardware problem at all, but just a "don't exactly know how" problem (such problems have, historically, been a major limitation on technology). Maybe "artificial person" research is stifling the development of actual practical software.

The other problem is that a robot can't really understand a human, unless it effectively is human. And when you are creating sapient entities to do your bidding, you are not only practicing slavery, you are practicing something far worse.

Then again, you're not talking about humans, so our psychology may not apply to whoever they are, but I dare say some psychological principles are almost universal, given that psychology is theorized to be formed along with the body during the evolutionary process.

Exactly; it isn't like human psychology was pulled out of the collective fundament of the universe; it's all set up as a means of survival.

While a sapient alien species may have a psychology that is as alien to us as their appearance, it is almost certain that it will converge on ours in many ways, just as their body plan converges with ours in having features such as legs, eyes, mouths, support structures, etc. They have to do a lot of things we do (or did) too, so it only makes sense that their psychology would be similar to ours.

The best option for studying alien psychology is probably to look at other intelligent organisms on Earth: elephants, cetaceans and certain bird species (we can toss out apes since, well, we're apes, and we can only expect our nearest relatives to have similarities to us). Despite all of them being very different from each other, they have key similarities.

Somehow I feel that an African Grey raised by a robot would turn out just as bad as a human raised by a robot. It'd probably turn out better if it were raised by humans...
